Cloud Security Mapping

Connecting Hava to your AWS and Azure accounts generates interactive cloud security mapping diagrams your team can use to review your cloud security...

AWS are always quick to point out what they refer to as the shared responsibility security model.

This defines what AWS are responsible for and what you need to take care of.

AWS look after the physical security of their data centres, their global infrastructure (regions, availability zones, edge locations and the links between them) and the foundational cloud infrastructure such as compute, storage, databases and networking. That covers everything from the physical hardware and software down to who is able to enter the bricks and mortar data centre buildings.

You as a customer are responsible for the resources you deploy in the cloud like operating systems, network and firewall settings, platform, application and identity and access management configuration, client and server side encryption and network traffic protection.

You set up the security that allows or denies access to your data, compute instances and applications. Your security team controls access to your virtual networks, and you are responsible for patching the applications and operating systems deployed in your AWS account.

AWS uses identities as a way to control who has access to which resources running on AWS.

When you create an AWS account, you will become the default “Root” user that has access to everything created in the AWS account. This owner account identity has the ability to perform all operations within the account, to create, access and delete everything.

AWS recommends that you enable two-factor authentication (2FA) on the root user as soon as you create a new AWS account.

This means that should someone discover your root username and password, they will still need the device that’s authorised to generate the 2FA codes for your AWS account. Without it they will be unable to log in with root permissions and wreak havoc.

Identity and Access Management (IAM) is the mechanism by which you grant access to your systems. You create an IAM user for each person or application you want to grant access to, however by default a new IAM user has zero permissions for your account. They cannot create a new EC2 instance, for example, or even place objects in an S3 bucket.

You have to explicitly grant a new IAM user permissions to be able to do anything. This principle of least privilege denies access to everything at the start and then you use an IAM policy to specifically grant permissions for each service a user needs access to, like read only S3 access or read/write access.

The IAM policy is a JSON document that describes what API calls a user can or cannot make using declarative Allow or Deny statements.

For example the following:

{
  "Version": "2012-10-17",
  "Statement": {
    "Effect": "Allow",
    "Action": "s3:ListBucket",
    "Resource": "arn:aws:s3:::MyS3Bucket"
  }
}

This would give the user the permission to list the contents of an S3 bucket named “MyS3Bucket”.

The user wouldn’t have permission to read from or write to objects in the bucket, purely list the contents.

The Effect setting is either Allow or Deny.

The Action is the name of the API call.

The Resource is the Amazon Resource Name (ARN) of the AWS resource the statement applies to.
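To make the Effect, Action and Resource fields a little more concrete, here is a minimal Python sketch that holds the example policy as a dict and checks a request against it. The `is_allowed` helper is hypothetical and heavily simplified: it assumes exact string matches and a single action per statement, whereas real IAM evaluation also handles wildcards, conditions and several policy types.

```python
import json

# The example policy from above, expressed as a Python dict.
policy = {
    "Version": "2012-10-17",
    "Statement": {
        "Effect": "Allow",
        "Action": "s3:ListBucket",
        "Resource": "arn:aws:s3:::MyS3Bucket",
    },
}


def is_allowed(policy, action, resource):
    """Toy evaluation: deny by default, and an explicit Deny beats an Allow.

    Assumption: exact string matching only. This is not how AWS
    implements evaluation, just an illustration of the idea.
    """
    statements = policy["Statement"]
    if isinstance(statements, dict):  # a single statement object is valid JSON policy
        statements = [statements]

    allowed = False
    for stmt in statements:
        if stmt["Action"] == action and stmt["Resource"] == resource:
            if stmt["Effect"] == "Deny":
                return False
            allowed = True
    return allowed


print(json.dumps(policy, indent=2))
# Listing the bucket matches the Allow statement.
print(is_allowed(policy, "s3:ListBucket", "arn:aws:s3:::MyS3Bucket"))  # True
# Reading an object is never mentioned, so it falls through to the default deny.
print(is_allowed(policy, "s3:GetObject", "arn:aws:s3:::MyS3Bucket/report.csv"))  # False
```

The object key `report.csv` is made up for the example.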

It could get quite overwhelming applying individual permissions for every user, so in reality you are more likely to create IAM groups. You then place users in an IAM group and attach a policy to that group. All users in the IAM group inherit the permissions allocated to the IAM group.
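As a rough illustration of how group membership works, the sketch below models groups as sets of allowed actions and gives each user the union of whatever their groups allow. The group names, user names and actions are all hypothetical, and the model ignores Deny statements and resources entirely.

```python
# Hypothetical groups, each with the actions its attached policy allows.
group_policies = {
    "developers": {"ec2:RunInstances", "s3:ListBucket"},
    "auditors": {"s3:ListBucket", "s3:GetObject"},
}

# Hypothetical group membership.
group_members = {
    "developers": {"alice", "bob"},
    "auditors": {"alice", "carol"},
}


def permissions_for(user):
    """Return the union of actions allowed by every group the user is in."""
    allowed = set()
    for group, members in group_members.items():
        if user in members:
            allowed |= group_policies[group]
    return allowed


print(sorted(permissions_for("alice")))  # in both groups, so gets all three actions
print(sorted(permissions_for("dave")))   # in no group, so gets nothing
```

Changing the policy on a group changes the permissions of every member at once, which is the point of using groups in the first place.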

IAM Roles are another mechanism for assigning permissions to users.

Instead of attaching policies to individual users, you can create a role and attach IAM policies to that role. A role is designed to be temporary in nature. When a user assumes a role, the role permissions replace the user’s normal permissions for the duration the role is in play.
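The "replace, then restore" behaviour of a role can be sketched with a small Python context manager. This is a toy model under stated assumptions, not the AWS API: the `Session` class and `assume_role` function are hypothetical names, and permissions are reduced to plain sets of action strings.

```python
from contextlib import contextmanager


class Session:
    """Hypothetical stand-in for a signed-in user and their permissions."""

    def __init__(self, user, permissions):
        self.user = user
        self.permissions = set(permissions)

    def can(self, action):
        return action in self.permissions


@contextmanager
def assume_role(session, role_permissions):
    """Swap in the role's permissions, then restore the user's own on exit."""
    original = session.permissions
    session.permissions = set(role_permissions)
    try:
        yield session
    finally:
        session.permissions = original


session = Session("alice", {"s3:ListBucket"})

with assume_role(session, {"ec2:StopInstances"}):
    print(session.can("ec2:StopInstances"))  # True: granted by the role
    print(session.can("s3:ListBucket"))      # False: the user's own permissions are set aside

print(session.can("s3:ListBucket"))          # True: back to normal once the role is dropped
```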

Other AWS Services and applications can assume roles to gain the permissions they require.

You can also federate your corporate sign-in identities with IAM roles. There is then technically no requirement to create an IAM user for each person: validated users in your organization can be granted access to AWS resources in your account using their corporate network login credentials.

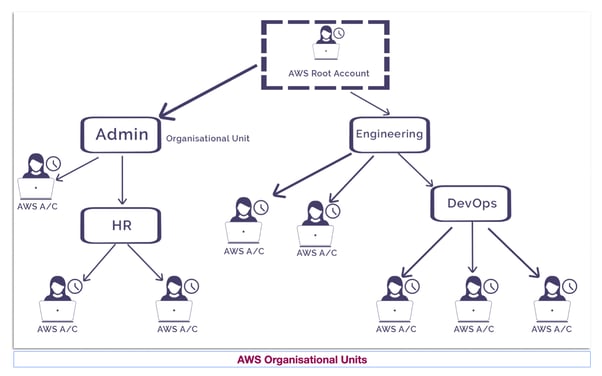

When you first start out with AWS you will most likely have a single account where everything resides. As you grow and develop the need to keep some systems separate, like accounting, development or geographically separate business units, the chances are you will create more AWS accounts to handle the separation.

As the number of AWS accounts grows, so does the complexity of keeping on top of billing, security permissions and ensuring the services you have running in each AWS account are needed and sized appropriately. It is good practice to separate business units and functions so that if you are introducing changes in one area, they are not adversely affecting another.

Also keeping data separate means business units only get access to the information they need. Your accounts department probably doesn’t need access to software development tools, so it makes sense to keep them separate.

AWS Organizations lets you manage multiple AWS accounts in an orderly way by pulling them into a central location.

With AWS Organizations you can manage billing, access, security and compliance across multiple AWS accounts from one place. You can consolidate billing for your organization, which pulls the costs across all AWS accounts in the org onto one primary account. This may result in bulk discounts based on usage across the entire organization.

Accounts can be placed into hierarchical groupings called organisational units (OUs) where similar accounts are categorised, like say developers. You can then define Service Control Policies (SCPs) that define exactly which resources, services and API actions OU members can access. Think of an SCP as an overarching policy document that applies to member AWS accounts as they are added to an OU. It sets the maximum permissions available in those accounts rather than granting any by itself.
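One way to picture how an SCP sits over the top of IAM is as an intersection: an action is only usable if the IAM policy allows it and the SCP permits it too. The sketch below is a simplified model with hypothetical action lists, ignoring Deny statements, resources and conditions.

```python
def effective_permissions(iam_allowed, scp_allowed):
    """Actions a user can actually perform: allowed by IAM *and* by the SCP."""
    return set(iam_allowed) & set(scp_allowed)


# Hypothetical SCP attached to a "developers" OU.
developers_scp = {"ec2:RunInstances", "ec2:StopInstances", "s3:ListBucket"}

# Hypothetical IAM permissions for a user in a member account.
user_iam = {"s3:ListBucket", "s3:DeleteBucket"}

print(sorted(effective_permissions(user_iam, developers_scp)))
# ['s3:ListBucket']
```

`s3:DeleteBucket` drops out because the SCP does not permit it, and the two EC2 actions drop out because the SCP alone does not grant anything.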

Depending on your location and industry, you may be subject to compliance audits. These requirements are specific to your business. If you are in an EU member state and store consumer data then you are subject to GDPR regulations. If you run healthcare applications in the US then you are obligated to comply with HIPAA.

AWS has built its data centres, infrastructure and networking to comply with a vast range of regulatory requirements, and as an AWS customer you inherit that compliance. However, it is up to you to ensure that what you build and where you build it also meets any regulatory compliance your individual business is subject to.

If your data needs to be stored in a particular geographic location to meet compliance, you can select the appropriate AWS region to host your application data.

You can ask AWS to provide proof of compliance and best practice for the region or availability zones that host your data, and you can use the AWS Artifact service to request copies of third party reports that validate a wide range of compliance protocols.

The AWS Customer Compliance Center has more information, case studies and white papers.

When someone launches a distributed denial of service (DDoS) attack on your network, the scale of AWS works in your favour.

Say for instance someone is sending a flood of UDP traffic to try and clog up your EC2 instances. The security groups protecting those instances only admit traffic that matches the rules you have defined, so the flood is dropped before it reaches the EC2 instances hosting your application. Because security groups are enforced at the AWS network level, backed by the vast scale of the AWS infrastructure, such an attack can be absorbed and blocked without impacting your production systems.
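Security groups behave like an allow list: traffic gets through only if an inbound rule matches it. A minimal sketch of that idea follows, assuming rules that match on protocol and port only. The `InboundRule` type and `is_admitted` helper are hypothetical, and real security group rules also carry source ranges and port ranges.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InboundRule:
    """Hypothetical, simplified inbound rule: protocol plus a single port."""
    protocol: str
    port: int


def is_admitted(rules, protocol, port):
    """Admit traffic only if some rule matches; everything else is dropped."""
    return any(r.protocol == protocol and r.port == port for r in rules)


# A web server that only accepts HTTPS.
web_rules = [InboundRule("tcp", 443)]

print(is_admitted(web_rules, "tcp", 443))  # True: normal customer traffic
print(is_admitted(web_rules, "udp", 53))   # False: the UDP flood never reaches the instance
```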

Elastic Load Balancing also helps mitigate attacks that try to tie up front end requests so that genuine users have to queue to make server requests. Because your load balancing can scale, an attacker would have to overload an entire AWS region in order to clog up your network. While technically possible, it would take a vast amount of resources to accomplish.

So AWS already has major measures in place to blunt DDoS attacks. You can also deploy AWS Shield with AWS WAF, which uses machine learning to identify new threats and handle them as they are happening.

AWS Shield Standard automatically protects AWS customer networks from the most common and regularly occurring DDoS attacks at no cost.

AWS Shield Advanced is a paid service that detects and mitigates complex DDoS attacks and integrates with Amazon CloudFront, Amazon Route 53 and Elastic Load Balancing.

That is a summary of the topics covered in the AWS Cloud Practitioner Essentials security module.

Other posts in this essentials series include:

Compute : https://www.hava.io/blog/aws-cloud-practitioner-essentials-compute

Global Infrastructure : https://www.hava.io/blog/aws-cloud-practitioner-essentials-global-infrastructure-and-reliability

Networking : https://www.hava.io/blog/aws-cloud-practitioner-essentials-networking

Storage and Databases : https://www.hava.io/blog/aws-cloud-practitioner-essentials-storage-and-databases

Next up we'll be covering Monitoring and Analytics.

Talking of monitoring, how are you monitoring what you have running in your cloud accounts? By connecting your AWS, Azure or GCP cloud accounts to hava.io you can auto generate network topology diagrams showing you exactly what resources you have configured and running.

Once you generate your diagrams they stay up to date automatically, hands free. No need to manually trigger updates or drag and drop anything. You can use the Hava API or integrations like Terraform and GitHub Actions to build documentation directly into your build pipelines.

You can take the fully featured Hava Teams plan for a 14-day free trial to test everything out. After the trial you can continue to use Hava for free with a single data source, or of course move forward with a paid plan to automate the documentation of multiple cloud accounts.
